We propose RAFT (Reinforcement from Agent Failure Tasks), which turns the failed trajectories of tool-use agents into targeted, executable tasks and builds an error-driven RL pipeline on them, achieving 82.5 Pass^1 on Tau2-Bench Retail.

Method

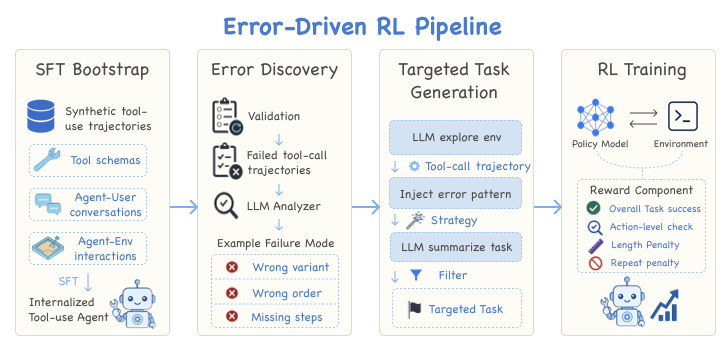

We present RAFT (Reinforcement from Agent Failure Tasks), an error-driven RL pipeline that converts failed tool-use trajectories into targeted executable tasks and trains the agent to correct its own mistakes.

Figure 1: The RAFT pipeline. Failed agent trajectories are analyzed, decomposed into executable subtasks, and fed back as RL training signal.
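
To make the first stage of the pipeline concrete, here is a minimal sketch of how failed trajectories might be turned into targeted task records. Every name in it (`Step`, `Trajectory`, `TargetedTask`, `mine_failure_tasks`) is hypothetical, and so is the assumption that failure analysis labels each tool call as succeeded or failed. It illustrates the idea only and is not the RAFT implementation.

```python
from dataclasses import dataclass


@dataclass
class Step:
    tool_name: str
    succeeded: bool  # hypothetical label, assumed to come from failure analysis


@dataclass
class Trajectory:
    task_id: str
    steps: list[Step]
    solved: bool  # whether the whole episode passed the benchmark check


@dataclass
class TargetedTask:
    source_task_id: str
    failing_tool: str
    prefix_length: int  # number of steps that preceded the first failure


def mine_failure_tasks(trajectories: list[Trajectory]) -> list[TargetedTask]:
    """Keep only failed trajectories and emit one task per first failing step."""
    tasks = []
    for traj in trajectories:
        if traj.solved:
            continue  # successful episodes contribute no error-driven task
        for index, step in enumerate(traj.steps):
            if not step.succeeded:
                tasks.append(TargetedTask(traj.task_id, step.tool_name, index))
                break  # assumption: only the earliest failure is targeted
    return tasks
```

In this sketch each resulting `TargetedTask` would then be instantiated as an executable environment for RL rollouts. How RAFT actually analyzes and decomposes failures is not specified here.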

Results

Error-driven RL consistently improves over both the base model and the SFT baseline across all Pass^k metrics on Tau2-Bench Retail, with the largest gains visible at stricter consistency thresholds (Pass^3, Pass^4).

Pass^k results on Tau2-Bench Retail (Qwen3-30B-A3B-Thinking-2507). RAFT (SFT + RL) consistently outperforms both the base model and SFT baseline. Higher is better; Pass^k is the probability that all k independent trials succeed.
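
As an illustration of the metric, Pass^k is commonly estimated per task from n trials with c successes as C(c, k) / C(n, k), then averaged over tasks. The sketch below assumes that estimator. The function names are illustrative, and the exact evaluation protocol used for the numbers reported here is not stated in this section.

```python
from math import comb


def pass_hat_k(num_trials: int, num_successes: int, k: int) -> float:
    """Estimate the probability that k trials drawn from this task all succeed."""
    if not 1 <= k <= num_trials:
        raise ValueError("k must satisfy 1 <= k <= num_trials")
    if not 0 <= num_successes <= num_trials:
        raise ValueError("num_successes must lie in [0, num_trials]")
    # comb(c, k) is 0 when c < k, so a task with too few successes scores 0.
    return comb(num_successes, k) / comb(num_trials, k)


def benchmark_pass_hat_k(successes_per_task: list[int], num_trials: int, k: int) -> float:
    """Average the per-task estimate over all tasks in the benchmark."""
    if not successes_per_task:
        raise ValueError("at least one task is required")
    per_task = [pass_hat_k(num_trials, c, k) for c in successes_per_task]
    return sum(per_task) / len(per_task)
```

For example, a task solved in 3 of 4 trials scores 0.75 at k = 1 but 0 at k = 4, which is why stricter thresholds separate consistent agents from occasionally successful ones.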